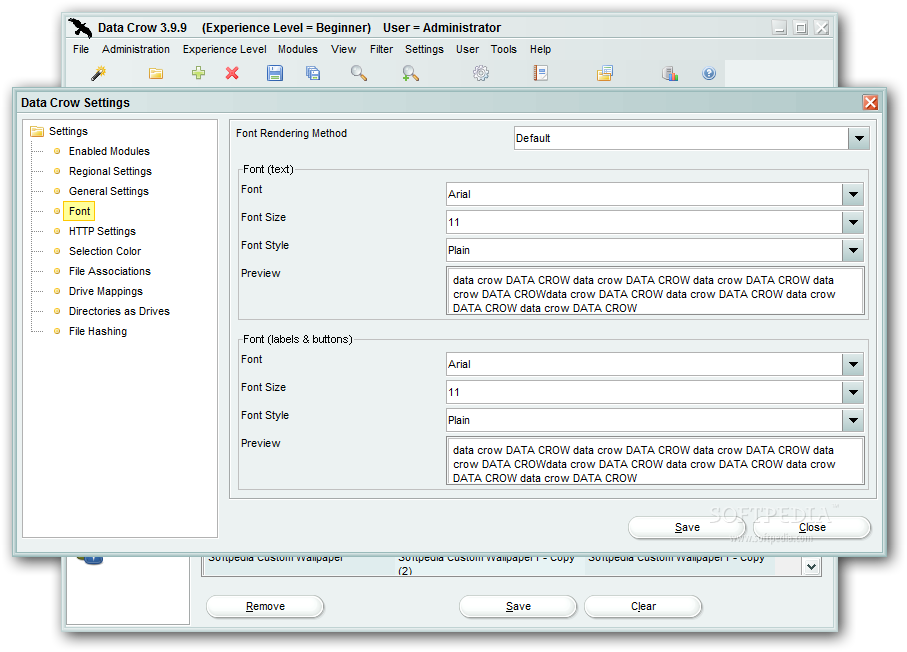

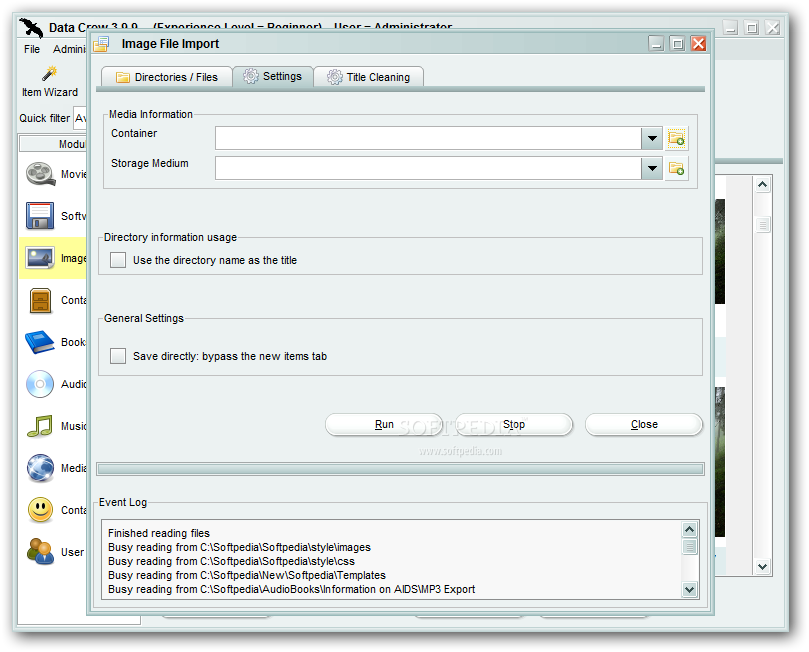

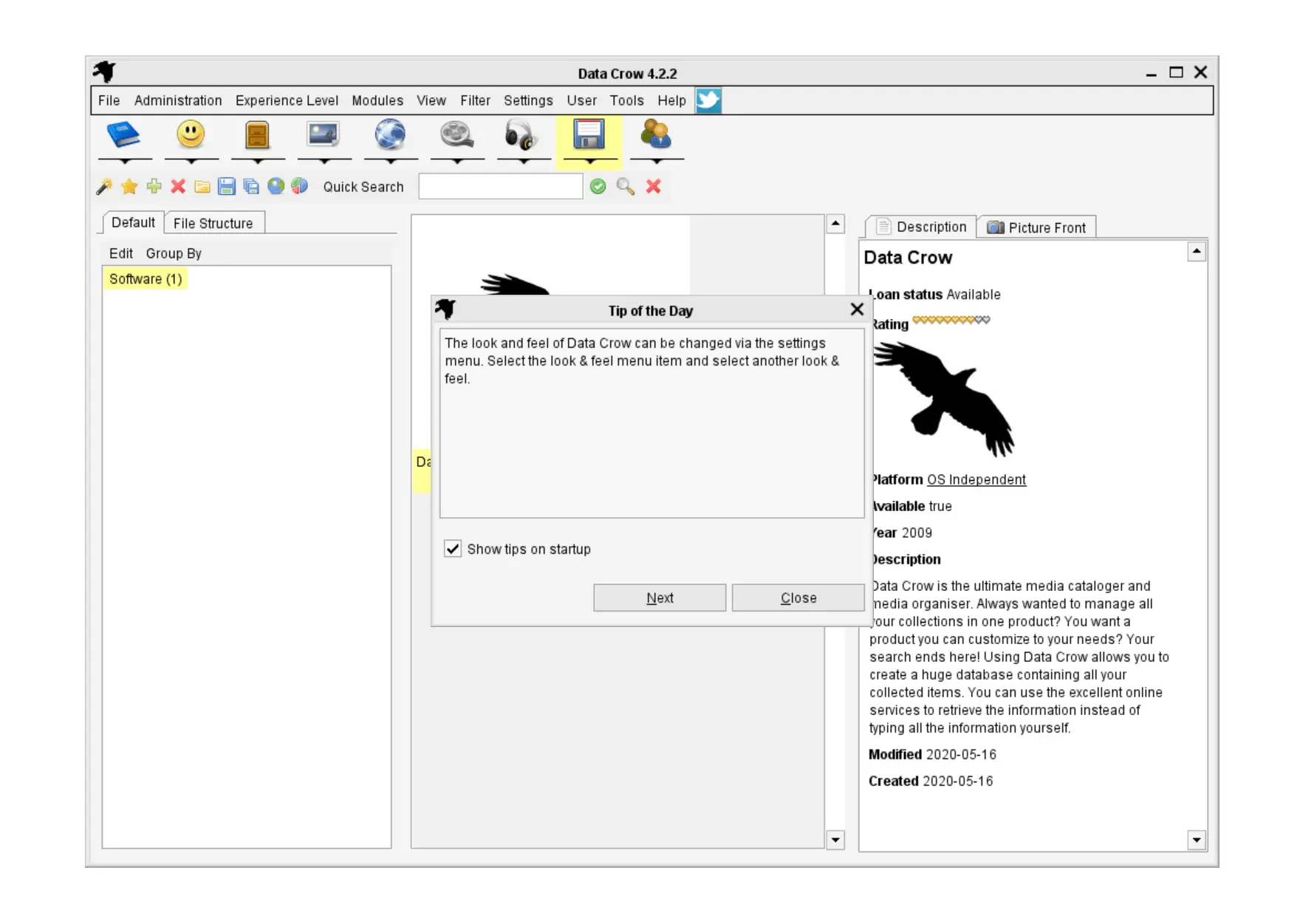

While striving to be the top tool for cataloguing data, Data Crow has become quite a complex beast. At least it requires no installation: once you've extracted the files from datacrow_3_4_12_zipped.zip, it is ready to launch.

When you run Data Crow for the first time, you'll be asked if you're a Beginner or an Expert user. Don't worry too much about this choice: you can change your status from the Experience Menu at any time. The difference between the two is that beginners can't create new custom modules or edit the built-in modules, such as Books, Music, Films and so on. Surprisingly, though, there's no built-in module for comic books, a staple for many collection managers.

From here on in, it's a case of building up your collections, and for this you can enlist the help of one of Data Crow's many handy wizards. Just enter some keywords or type in the ISBN for a book and Data Crow will check online to find appropriate matches. Data Crow can extract data directly from your music discs too, which makes cataloguing a music collection much simpler. Only when nothing is found will you have to resort to typing in data manually.

Pleasingly, it can also create charts for each of your collections based on one of the data fields and keep track of what you've loaned. To do the latter, you'll need to enter your friend's details into the Contact Person collection, but then you can use the loan management feature to track which items are on loan and to whom.

Our major gripe is that there's no documentation on the project website, and Data Crow only comes with a barely decent help function that you can access by clicking Help > Help. Helpful tooltips were sorely missed throughout our time with Data Crow.

Data Crow tries to do a little too much, which results in a complicated interface that could definitely use tooltips.

This example uses the define Object to include a native SuiteScript module and a custom module. The module's file path is used to pass in the custom utility as a dependency. As a best practice, it does not include the.

My Apache Beam pipeline implements custom Transforms and ParDos in Python modules, which in turn import other modules written by me. On the local runner this works fine, as all the files are available in the same path. With the Dataflow runner, the pipeline fails with a module import error. How do I make custom modules available to all the Dataflow workers? Please advise.

Below is an example:

    ImportError: No module named DataAggregation
    at find_class (/usr/lib/python2.7/pickle.py:1130)
    at find_class (/usr/local/lib/python2.7/dist-packages/dill/dill.py:423)
    at load_global (/usr/lib/python2.7/pickle.py:1096)
    at load (/usr/lib/python2.7/pickle.py:864)
    at load (/usr/local/lib/python2.7/dist-packages/dill/dill.py:266)
    at loads (/usr/local/lib/python2.7/dist-packages/dill/dill.py:277)
    at loads (/usr/local/lib/python2.7/dist-packages/apache_beam/internal/pickler.py:232)
    at apache_.PGBKCVOperation._init_ (operations.py:508)
    at apache_.create_pgbk_op (operations.py:452)
    at apache_.create_operation (operations.py:613)
    at create_operation (/usr/local/lib/python2.7/dist-packages/dataflow_worker/executor.py:104)
    at execute (/usr/local/lib/python2.7/dist-packages/dataflow_worker/executor.py:130)
    at do_work (/usr/local/lib/python2.7/dist-packages/dataflow_worker/batchworker.py:642)

The issue is probably that you haven't grouped your files as a package. The Beam documentation has a section on it.

Often, your pipeline code spans multiple files. To run your project remotely, you must group these files as a Python package and specify the package when you run your pipeline. When the remote workers start, they will install your package. To group your files as a Python package and make it available remotely, perform the following steps:

1. Create a setup.py file for your project. A very basic setup.py file is enough.
2. Structure your project so that the root directory contains the setup.py file, the main workflow file, and a directory with the rest of the files. See Juliaset for an example that follows this required project structure.
3. Run your pipeline with the following command-line option: --setup_file /path/to/setup.py

Note: If you created a requirements.txt file and your project spans multiple files, you can get rid of the requirements.txt file and instead add all packages contained in requirements.txt to the install_requires field of the setup call (in step 1).
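For the packaging approach described here (grouping the pipeline's files into a Python package with a setup.py and listing dependencies in install_requires), a minimal sketch might look like the following. The package name, version, and requirement strings are hypothetical placeholders, and the small parse_requirements helper is an illustration of moving entries out of a requirements.txt file, not something the Beam documentation provides.

```python
"""Hypothetical setup.py sketch for packaging a multi-file pipeline."""
import sys

# Assumed contents of a former requirements.txt (illustrative values only).
REQUIREMENTS_TEXT = """
# runtime dependencies
requests>=2.0
numpy==1.26.4  # pinned
"""


def parse_requirements(text):
    """Return requirement specifiers from requirements.txt-style text.

    Simplification: skips blank lines, comment lines, and pip option lines
    (those starting with "-"), and strips trailing " # ..." comments.
    """
    requirements = []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or line.startswith("-"):
            continue
        requirements.append(line.split(" #", 1)[0].strip())
    return requirements


SETUP_KWARGS = {
    "name": "my_pipeline_package",  # hypothetical package name
    "version": "0.0.1",             # hypothetical version
    "install_requires": parse_requirements(REQUIREMENTS_TEXT),
}


def main():
    # Imported here so the helper above can be used without setuptools.
    import setuptools

    # find_packages() picks up directories that contain an __init__.py.
    setuptools.setup(packages=setuptools.find_packages(), **SETUP_KWARGS)


# Only call setup() when a command (for example "sdist") is supplied.
if __name__ == "__main__" and len(sys.argv) > 1:
    main()
```

With a file like this in the project root, next to the main workflow file and the package directory, the pipeline would be launched with --setup_file pointing at it. The directory holding the custom modules needs an __init__.py so that find_packages() includes it.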
